If you’ve ever yelled at Siri, waited forever for Alexa to catch up, or felt like ChatGPT’s voice mode was just reading lines off a script, you’re going to love this. I’ve spent the last few weeks diving deep into Sesame AI, and honestly? It feels like the first time a voice assistant actually gets me. This complete guide to Sesame AI covers every angle (what Sesame AI is, how the tech works, real demos, open-source tools, and what’s coming next) so you can decide if it’s worth your time in 2026.

Whether you’re a developer itching to run Sesame CSM locally, a creator hunting for the next big voice demo, or just someone tired of robotic assistants, you’re in the right place. Let’s break it all down like we’re chatting over coffee.

Try the public research preview yourself: Sesame AI

What Is Sesame AI? The Story Behind Brendan Iribe’s Next Big Bet

What is Sesame AI? In simple terms, it’s a San Francisco startup building lifelike voice companions that feel like talking to a real friend. Founded in 2023 by Oculus veterans, the company is laser-focused on “voice presence”—that magical feeling when an AI doesn’t just answer you but actually connects.

The driving force? Brendan Iribe. As the co-founder and former CEO of Oculus (the VR company Facebook bought for $2 billion), Iribe knows hardware and immersive experiences better than almost anyone. He teamed up with Ankit Kumar (ex-Ubiquity6 CTO) and other Oculus vets like Nate Mitchell and Ryan Brown to create something bigger than VR: AI that sees, hears, and talks naturally.

They call the company simply Sesame—easy to remember, right? And from day one, the mission has been clear: make computers feel alive.


Sesame AI Explained: Meet the Conversational Speech Model (CSM)

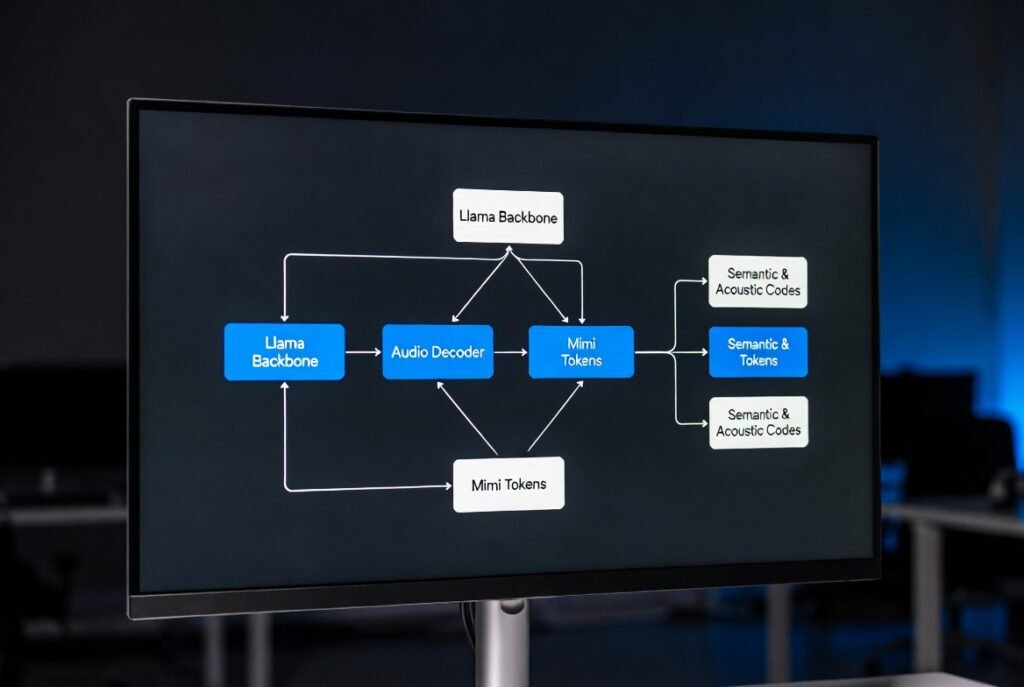

At the heart of everything is the Sesame Conversational Speech Model, or Sesame CSM for short. Unlike old-school text-to-speech that just reads words, Sesame’s voice model generates speech end-to-end. It takes the conversation history into account, picks up on tone, and responds with natural pauses, laughs, breaths, and emotion.

Trained on roughly one million hours of real English audio, the model uses a Llama-style backbone plus a tiny audio decoder. The 1B version (CSM-1B) was open-sourced in March 2025 under Apache 2.0, which is huge news for anyone who hates locked-down tech.

This is why Sesame AI feels like a breakthrough. It doesn’t just sound good: how each line is spoken depends on the conversation so far.

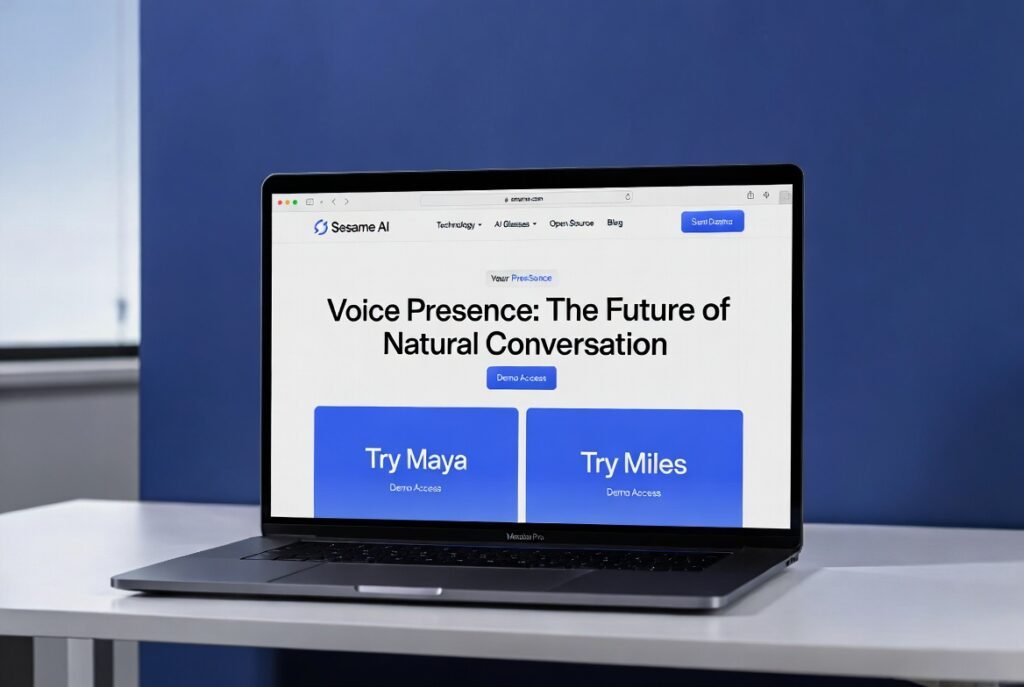

Try It Yourself: Sesame AI Voice Demo with Maya and Miles

The best way to understand the hype is to hear it. Head to app.sesame.com for the free Sesame AI voice demo. You’ll meet Maya and Miles—two voices that went viral the moment they launched in February 2025. Over a million people jumped in and racked up five million minutes of conversation in weeks.

I asked Maya to spin a Dungeons & Dragons story and then insert herself as a quirky gnome engineer. She didn’t miss a beat. The pacing, the little chuckles, the way she remembered details from two minutes earlier: it all felt real. If you want to hear Maya and Miles for yourself, this is the spot.

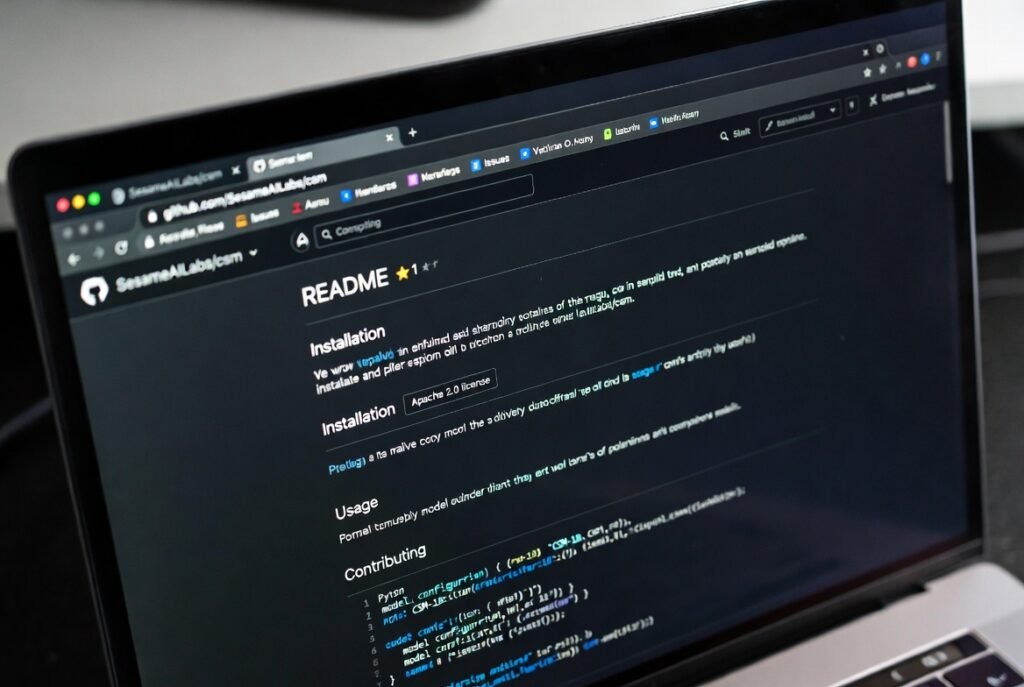

How to Use Sesame CSM: GitHub Tutorial and Local Setup

Ready to play with the tech yourself? Here’s the no-fluff Sesame CSM GitHub tutorial.

- Go to github.com/SesameAILabs/csm

- Clone the repo

- Install dependencies (Python 3.10+, CUDA GPU recommended)

- Log in to Hugging Face for the checkpoints

Installing Sesame CSM 1B takes about 10 minutes on a decent machine. Once it’s running, you can feed it text plus previous audio context and have it generate natural-sounding speech.
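To make "text plus previous audio context" a bit more concrete, here’s a minimal sketch of how you might organize conversation history before handing it to the model. Everything in it is my own illustration, not the repo’s API: the `Turn` class, the `trim_context` helper, and the 24 kHz sample rate are all assumptions, and the audio is just silence standing in for real recordings.

```python
from dataclasses import dataclass, field
from typing import List

SAMPLE_RATE = 24_000  # assumed sample rate in Hz, for illustration only


@dataclass
class Turn:
    """Hypothetical stand-in for one prior utterance: speaker, text, raw audio."""
    speaker: int
    text: str
    audio: List[float] = field(default_factory=list)

    @property
    def duration_s(self) -> float:
        # Length of the audio clip in seconds.
        return len(self.audio) / SAMPLE_RATE


def trim_context(turns: List[Turn], max_seconds: float) -> List[Turn]:
    """Keep the most recent turns whose total audio fits within max_seconds."""
    kept: List[Turn] = []
    total = 0.0
    for turn in reversed(turns):  # walk from newest to oldest
        if total + turn.duration_s > max_seconds:
            break
        kept.append(turn)
        total += turn.duration_s
    kept.reverse()  # restore chronological order
    return kept


# Three turns of 2, 3, and 4 seconds of (silent) placeholder audio.
history = [
    Turn(speaker=0, text="Hey, how are you?", audio=[0.0] * SAMPLE_RATE * 2),
    Turn(speaker=1, text="Pretty good, you?", audio=[0.0] * SAMPLE_RATE * 3),
    Turn(speaker=0, text="Great. Tell me a story.", audio=[0.0] * SAMPLE_RATE * 4),
]

context = trim_context(history, max_seconds=8.0)
print([t.text for t in context])
# ['Pretty good, you?', 'Great. Tell me a story.']
```

Trimming from the newest turn backwards keeps the context that matters most for tone while capping how much audio the model has to chew through. The 8-second budget is arbitrary; pick whatever your hardware handles comfortably.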

GitHub: https://github.com/SesameAILabs/csm

Hugging Face: https://huggingface.co/sesame/csm-1b (and native support in Transformers v4.52.1+)
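Before you clone anything, it can save time to check your environment against the requirements mentioned above (Python 3.10+ and, for the native route, Transformers v4.52.1+). Here’s a small sketch that does that with the standard library only. The helper names (`parse_version`, `version_at_least`, `preflight`) are mine, and the version parsing is deliberately simple, so treat it as a rough sanity check rather than a proper dependency resolver.

```python
import re
import sys
from importlib import metadata
from typing import Optional, Tuple

MIN_PYTHON = (3, 10)
MIN_TRANSFORMERS = "4.52.1"


def parse_version(version: str) -> Tuple[int, ...]:
    """Turn '4.52.1' or '4.53.0.dev0' into a tuple of leading integers."""
    parts = []
    for piece in version.split("."):
        match = re.match(r"\d+", piece)
        if match is None:  # stop at the first non-numeric piece, e.g. 'dev0'
            break
        parts.append(int(match.group()))
    return tuple(parts)


def version_at_least(installed: str, required: str) -> bool:
    """True if the installed version is the required version or newer."""
    return parse_version(installed) >= parse_version(required)


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of a package, or None if it is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def preflight() -> dict:
    """Collect simple pass/fail checks for a local CSM setup."""
    transformers_version = installed_version("transformers")
    return {
        "python_ok": sys.version_info[:2] >= MIN_PYTHON,
        "torch_installed": installed_version("torch") is not None,
        "transformers_ok": transformers_version is not None
        and version_at_least(transformers_version, MIN_TRANSFORMERS),
    }


if __name__ == "__main__":
    for check, passed in preflight().items():
        print(f"{check}: {'ok' if passed else 'missing or too old'}")
```

This only tells you whether the packages are present and recent enough. It doesn’t check for a CUDA GPU or Hugging Face login, which you’ll still need to sort out yourself.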

Want to run Sesame CSM locally on your own laptop? Yes, it works great on an RTX 4090 or even mid-range cards with quantization. The README has copy-paste code examples that actually work—I tested them yesterday.
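Why does quantization help on smaller cards? A quick back-of-the-envelope calculation shows it. The sketch below estimates memory for the weights alone, assuming a round 1 billion parameters for the "1B" model (my simplification, not an official figure). Real usage will be higher once you add activations, caches, and the audio codec.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weight_memory_gib(num_params: int, dtype: str) -> float:
    """Approximate memory for model weights only, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3


PARAMS = 1_000_000_000  # assumed round figure for a "1B" model

for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: {weight_memory_gib(PARAMS, dtype):.2f} GiB")
# float32: 3.73 GiB
# float16: 1.86 GiB
# int8: 0.93 GiB
```

Going from 32-bit to 8-bit weights cuts the weight footprint to a quarter, which is the difference between needing a high-end card and squeezing onto a mid-range one.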

Sesame CSM Fine Tune and Advanced Customization

The base model is impressive, but the real fun starts when you fine-tune it. The official Sesame CSM fine-tuning guide on GitHub walks you through creating custom voices, adding accents, or training on your own podcast audio. Developers are already building everything from personalized reading assistants to game NPCs that actually sound alive.

Sesame AI Open Source: Why Developers Are Going Crazy

The Sesame AI open source release changed the game. Most big voice companies keep their models locked away. Sesame dropped the 1B model for free commercial use (with ethical rules against deepfakes and scams). That move alone earned massive respect in the community.

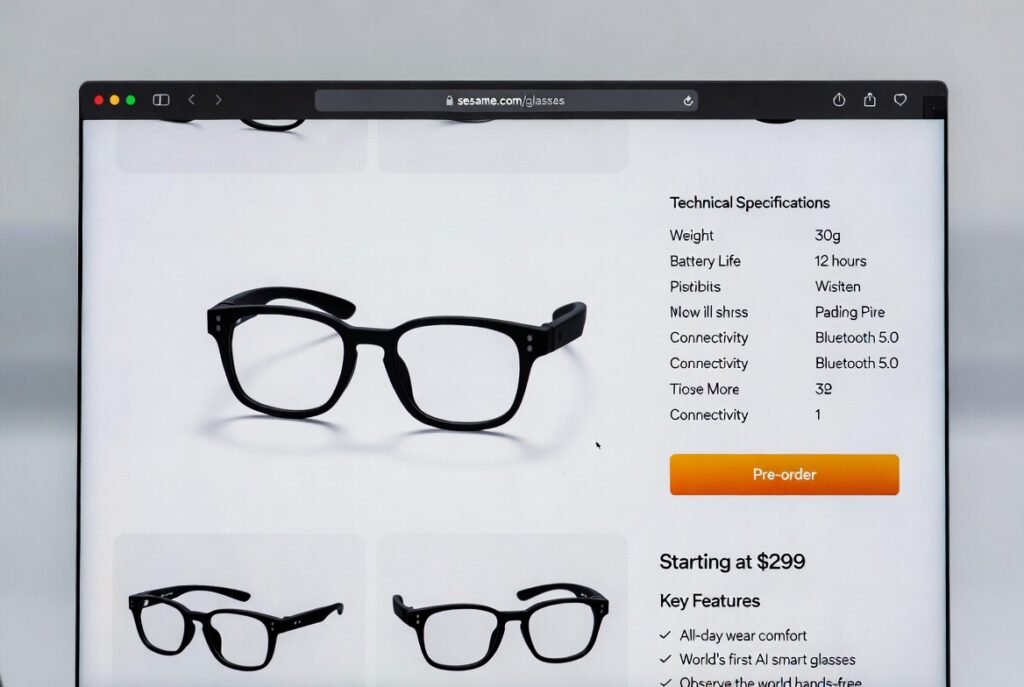

Sesame AI Glasses Release Date and the Smart Glasses Vision

Of course, voice is only half the story. Sesame is also building Sesame AI glasses—lightweight, all-day wearable eyewear with high-quality audio. The AI companion will literally “observe the world alongside you.”

As of February 2026, there’s still no official Sesame AI glasses release date. Prototypes have been shown, and industry whispers point to late 2026. But when they arrive, expect Sesame AI smart glasses to feel like having a super-smart friend sitting on your face. Fashion-forward design is a big focus so you’ll actually want to wear them.

Sesame AI vs ElevenLabs, OpenAI Voice, ChatGPT Advanced Voice & Grok Voice

Let’s get real with comparisons:

- Sesame AI vs ElevenLabs: ElevenLabs wins on voice cloning variety, but Sesame crushes conversational flow and context memory.

- Sesame AI vs OpenAI voice: OpenAI’s Advanced Voice is polished, yet Sesame feels warmer and more human in back-and-forth chats.

- Sesame CSM vs ChatGPT Advanced Voice: The open-source nature plus lower latency on long contexts gives Sesame the edge for power users.

- Sesame AI vs Grok voice: Grok is fun and witty, but Sesame’s emotional intelligence and natural breathing still win for everyday conversations.

How to Sign Up for the Sesame AI Beta and Get API Access

Want early access? Go to sesame.com/beta right now to sign up for the Sesame AI beta. The iOS TestFlight app gives Maya and Miles extra superpowers like searching and thinking on the fly.

Sesame AI API access is rolling out gradually for developers. Keep an eye on the GitHub repo for updates. Once it’s live, building apps will get a whole lot easier.

Can You Make Money with Sesame AI in 2026?

Absolutely. Plenty of creators are already exploring ways to make money with Sesame AI in 2026: custom voice apps for language learning, podcast tools, game audio, therapy companions, and more. The open-source model lowers the barrier so indie devs can build and monetize fast.

Quick Note on “Seasame AI” Searches

If you typed seasame ai by mistake (it happens!), you’ll still land here. Google is smart enough to show the right results, but bookmark sesame.com to avoid the typo trap.

Frequently Asked Questions

What is the Sesame Conversational Speech Model exactly? It’s an end-to-end AI that generates natural speech while understanding full conversation context.

Is Sesame AI free to try? Yes—the research preview at app.sesame.com is free, and the 1B CSM model is completely open source.

When is the Sesame AI glasses release date? No confirmed date yet, but most analysts expect late 2026.

Can I run Sesame CSM locally without a powerful PC? With 8-bit quantization, yes—even on consumer GPUs.

Does Sesame AI support languages besides English? Mainly English right now. The base model has some capacity for other languages because of non-English audio in its training data, but don’t expect the same quality.

How does Sesame AI compare to other voice models in 2026? It leads in natural conversational flow and emotional expressiveness.

Is there Sesame AI API access yet? Early developer access is opening; a full public API is coming soon.

Can I use Sesame for commercial projects? Yes, under Apache 2.0 with the ethical guidelines.

What’s the best way to start fine-tuning Sesame CSM? Follow the official GitHub examples and start with a small dataset.

Will Sesame AI smart glasses work with the voice model? That’s the whole plan—seamless integration for hands-free use all day.